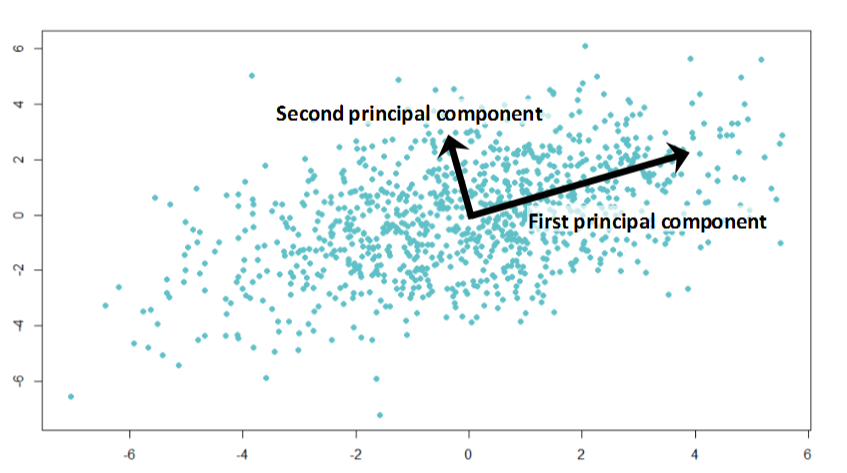

In this article, we will look at some useful techniques for reducing dimensionality in our datasets.

When we talk about dimensionality, we are referring to the number of columns in our dataset, assuming that we are working with a tidy and clean dataset.

When we have many columns in our dataset, for example more than ten, the data is considered high dimensional. If we are new to the dataset, it becomes extremely difficult to find patterns within it because of the complexity that comes with high dimensional data. To overcome this, we can reduce the number of columns in the dataset using dimensionality reduction techniques. These techniques can also be very useful for low dimensional datasets (those with fewer columns). In this article, we will look at three of the most commonly used dimensionality reduction techniques.

The main purpose of PCA (Principal Component Analysis) is to decrease the complexity of the model: to simplify it while maintaining its relevance and performance. PCA reduces the dimensions (features) in the dataset by looking at the correlation between different features. Sometimes we have datasets with hundreds of features, and in that case we want to extract a much smaller number of independent variables that explain most of the variance in the dataset.

In the following section, we will take a step-by-step approach to applying PCA to reduce the features of a sample high dimensional dataset. The sample 'Beer' dataset is the one we will use to demonstrate all three dimensionality reduction techniques (PCA, LDA and Kernel PCA). Its feature columns describe the properties of the beer; these are the independent variables (also called the input features). The column named Beer Grade is the dependent variable (what we want to predict), as it gives the quality of the beer: which grade it falls in, 1, 2 or 3. In short, the independent variables are the input data we have and use to predict something, and that something is the dependent variable.

First we import the necessary Python modules into the IDE (Integrated Development Environment). Here we are using Jupyter Lab from the Anaconda Distribution.
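The idea behind PCA described in this article, finding a few directions that explain most of the variance among correlated features, can be sketched roughly as follows. This is only an illustration under stated assumptions: it assumes NumPy is installed, it uses synthetic data as a stand-in for the Beer dataset (whose columns are not reproduced here), and the names `pca_fit_transform` and `mixing` are hypothetical. It is not necessarily the code used later in the article.

```python
import numpy as np


def pca_fit_transform(X, n_components):
    """Project X (n_samples, n_features) onto its top principal components."""
    # Standardize each feature so no column dominates because of its scale.
    X_std = (X - X.mean(axis=0)) / X.std(axis=0)
    # Covariance of the standardized features (proportional to their correlation matrix).
    cov = np.cov(X_std, rowvar=False)
    # eigh is meant for symmetric matrices; it returns eigenvalues in ascending order.
    eigenvalues, eigenvectors = np.linalg.eigh(cov)
    order = np.argsort(eigenvalues)[::-1]  # re-sort from largest to smallest
    eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
    # Share of the total variance explained by each component.
    explained_ratio = eigenvalues / eigenvalues.sum()
    components = eigenvectors[:, :n_components]
    return X_std @ components, explained_ratio[:n_components]


# Synthetic stand-in data (an assumption, not the Beer dataset):
# six correlated features generated from two hidden factors plus a little noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
mixing = np.array([[1.0, 0.8, 0.6, 0.4, 0.2, 0.1],
                   [0.1, 0.3, 0.5, 0.7, 0.9, 1.0]])
X = latent @ mixing + 0.05 * rng.normal(size=(200, 6))

X_reduced, ratio = pca_fit_transform(X, n_components=2)
print("reduced shape:", X_reduced.shape)
print("variance explained by 2 components:", ratio.sum())
```

Because the six synthetic features are driven by only two hidden factors, two components should capture nearly all of the variance. With real data the explained variance ratio is what tells us how many components are worth keeping. In practice, scikit-learn's `PCA` class (with its `n_components` parameter and `explained_variance_ratio_` attribute) packages the same idea.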